ChatGPT's idea of a random number is 42. What should that tell you?

Does ChatGPT think like a human or a program?

42 is the answer

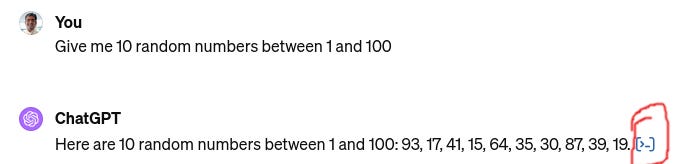

Leniolabs ran an experiment in which they asked ChatGPT 3.5-Turbo to pick a random number between 1 and 100, and here are the results:

The fact that the numbers are not random is not at all a surprise. And, of course, the most common answer is 42, because it is the Answer to the Ultimate Question of Life, the Universe, and Everything.

Anand phrased the question thus: When picking a number between 1-100, do #LLMs pick randomly? Or pick like a human?

I think this is an important question and knowing the answer well is necessary to be able to use LLMs well without running into nasty surprises.

The short answer is: Of course, ChatGPT 3.5 will think like a human.

ChatGPT 3.5 is an LLM, meaning Large Language Model. All an LLM does is recognize patterns in its training data, which is mostly examples of language use from all over the internet and Wikipedia and 20000 books and research papers. So it is not a surprise that the picking is biased according to what's in the training data, and it is not a surprise that 42 wins.

ChatGPT is a language model. You shouldn’t expect it to do maths. Most people are surprised that it makes dumb mistakes when given math problems. That should be expected considering that it is a language model. The fact that a language model is actually able to solve some of the simpler math problems should come as a surprise. (And it did, to the researchers and early users of LLMs.)

Can I get random numbers from ChatGPT?

This brings me to the really important question: If I wanted to generate random numbers which are not 42, can I use ChatGPT for it?

The answer is Yes!

But, as I’ve been saying for a while, you need to get the paid version: ChatGPT4.

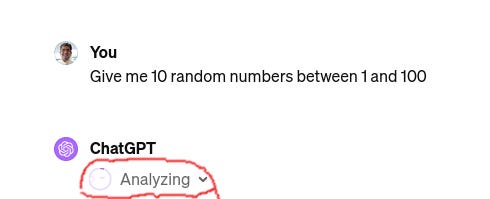

ChatGPT4 can write Python programs, run them, and use the output in its answers. And ChatGPT4 is smart enough to realize that when I ask it to generate a random number it should write a program.

Notice the “Analyzing ⌄”? That means that ChatGPT4 has just written a program and is running it.

And here’s the result. You’ll see a disappointing lack of 42s. (The “[>_]” at the end indicates that a program was used to generate this answer, and if you click on it, you’ll be shown the program and its output.)
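For reference, the kind of program ChatGPT4 writes behind the scenes is probably only a few lines long. The following is a minimal sketch of what such a program might look like, not the code ChatGPT4 actually produced; the function name `pick_random_numbers` and its parameters are assumptions made for illustration.

```python
import random


def pick_random_numbers(count, low=1, high=100):
    """Return `count` pseudo-random integers between low and high, inclusive."""
    # random.randint includes both endpoints, so 1 and 100 are both possible.
    return [random.randint(low, high) for _ in range(count)]


# Hypothetical usage: ask for ten numbers between 1 and 100.
numbers = pick_random_numbers(10)
print(numbers)
```

Because these numbers come from Python's pseudo-random generator rather than from the language model's own word predictions, 42 is no more likely to show up than any other value.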

Can you explain why ChatGPT’s random numbers contain 42, 47, 72 etc?

Anand continues:

[ChatGPT3.5, Claude 3 Haiku, Gemini] all avoid multiples of 10 (10, 20, ...), repeated digits (11, 22, ...), single digits (1, 2, ...) and prefer 7-endings (27, 37, ...). These are clearly human #biases -- avoiding regular / round numbers and seeking 7 as "random".

Strangely, they all avoid numbers ending with 1, and like 72, 73 and 56 a lot. (I don't know why. Any guesses?)
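If you want to check claims like these against your own samples, one option is to measure what share of the picks falls into each category and compare it with what a truly uniform pick would give. The sketch below is only an illustration: the function name `category_shares` and the category labels are my own, and the baseline is computed from the numbers 1 to 100 themselves, not from any LLM output.

```python
def category_shares(numbers):
    """Return the fraction of `numbers` that falls into each category."""
    numbers = list(numbers)
    if not numbers:
        raise ValueError("need at least one number")
    # Hypothetical category definitions, modelled on the patterns quoted above.
    categories = {
        "multiple_of_10": lambda n: n % 10 == 0,
        "repeated_digits": lambda n: 10 <= n <= 99 and n // 10 == n % 10,
        "single_digit": lambda n: n < 10,
        "ends_in_7": lambda n: n % 10 == 7,
        "ends_in_1": lambda n: n % 10 == 1,
    }
    total = len(numbers)
    return {
        name: sum(1 for n in numbers if matches(n)) / total
        for name, matches in categories.items()
    }


# Baseline: every number from 1 to 100 exactly once, i.e. a perfectly uniform pick.
baseline = category_shares(range(1, 101))
print(baseline)
```

Under a uniform pick, each of these categories covers about 9 to 10 percent of the numbers. Passing a list of an LLM's answers to the same function would show how far it strays from that baseline.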

It is possible to make educated guesses as to why LLMs behave like this. What Anand says about “avoiding regular / round numbers” is plausible and might even be true.

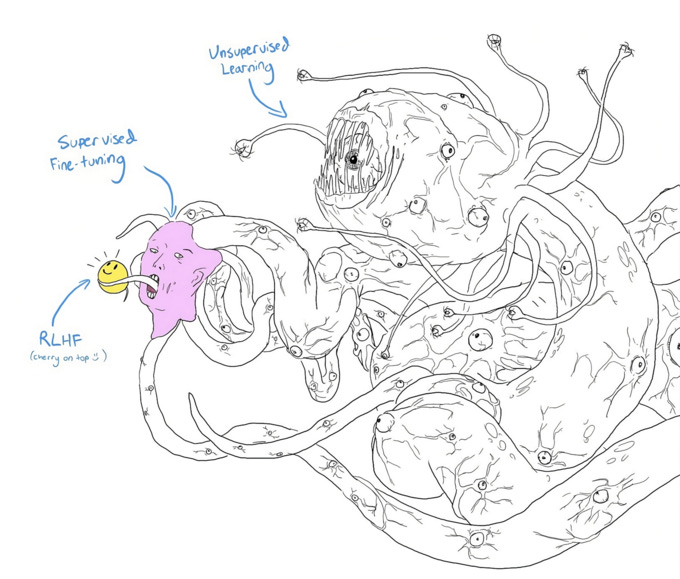

But it will be a long, long time before we can really understand an LLM’s thought process, because it is insanely complicated and insanely bizarre.

Let me illustrate with an example.

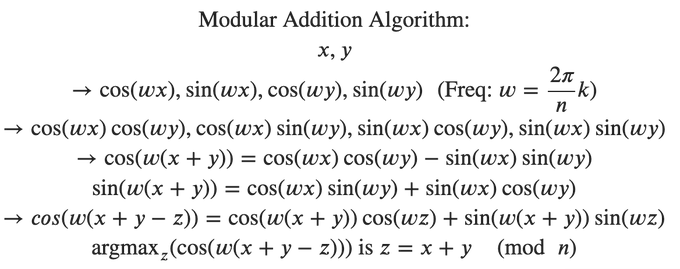

Researchers trying to understand how deep-learning neural networks (the technology underlying LLMs) work built a tiny deep-learning model to do modular addition. Then, they spent a lot of time trying to figure out how it does this addition.

Before continuing, it would be helpful for you to understand modular addition. It is addition similar to what we do on a clock face: numbers above 12 loop back, starting with 1. Hence in modulo 12 addition, 11 + 2 = 1 and 10 + 5 = 3. In modulo 100 addition, 90 + 25 = 15. Basically, there’s a “maximum number” (the modulus), and whenever the sum goes above it, we divide the sum by that number and keep only the remainder. For humans, the operation is quite simple.
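In code, the human way of doing this is a one-liner using the remainder operator. Here is a small sketch reproducing the three examples above; the function name `modular_add` is just a label chosen for illustration.

```python
def modular_add(a, b, modulus):
    """Add a and b, then keep only the remainder after dividing by modulus."""
    return (a + b) % modulus


print(modular_add(11, 2, 12))    # 1
print(modular_add(10, 5, 12))    # 3
print(modular_add(90, 25, 100))  # 15
```

One small difference from a real clock face: in modulo 12 arithmetic, 6 + 6 gives 0 rather than 12.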

But, that’s not how the neural network does it. The researchers found that it uses discrete Fourier transforms followed by trigonometric identities. The image below is the formula the “simple” neural network is using to compute modular addition:

Check out this article for more on why it is going to be very difficult to explain how or why an LLM behaves in a certain way.
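To give a flavour of how roundabout the Fourier-and-trigonometry route is, here is a hand-written sketch of the general idea: turn each input into sines and cosines, combine them with the angle-addition identities, and pick the candidate answer that lines up best. This illustrates the technique only; it is not the network's actual weights or the researchers' code. The function name `modular_add_via_trig` and the choice to use every frequency from 1 to modulus - 1 are my assumptions.

```python
import math


def modular_add_via_trig(a, b, modulus):
    """Compute (a + b) mod modulus using only sines, cosines and an argmax."""
    scores = [0.0] * modulus
    # Assumption: use every nonzero frequency; a trained network may use only a few.
    for k in range(1, modulus):
        w = 2 * math.pi * k / modulus
        cos_a, sin_a = math.cos(w * a), math.sin(w * a)
        cos_b, sin_b = math.cos(w * b), math.sin(w * b)
        # Angle-addition identities give cos(w(a+b)) and sin(w(a+b))
        # without ever adding a and b directly.
        cos_sum = cos_a * cos_b - sin_a * sin_b
        sin_sum = sin_a * cos_b + cos_a * sin_b
        for c in range(modulus):
            # Equals cos(w(a + b - c)), which is largest when c matches (a + b) mod modulus.
            scores[c] += cos_sum * math.cos(w * c) + sin_sum * math.sin(w * c)
    # The candidate with the highest total score is the answer.
    return max(range(modulus), key=lambda c: scores[c])


print(modular_add_via_trig(90, 25, 100))  # 15
print(modular_add_via_trig(11, 2, 12))    # 1
```

It gets the same answers as simple remainder arithmetic, just by a path no human would choose.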

Summary

LLMs think like humans. Because they’ve been trained on human thoughts and human text. And they will have human-like biases and make human-like mistakes.

Except if you’re using ChatGPT4 and it chooses to use “Advanced Data Analysis” (i.e. it writes a program to answer your question).

And no, nobody can explain why an LLM behaves in a certain way. Don’t make the mistake of thinking that you understand an LLM: it is a bizarre beast wearing a polite and helpful mask to make you feel comfortable.